Introduction: Why A/B Testing Is Critical for Business Growth

A/B testing plays a fundamental role in helping businesses grow by removing uncertainty from decision-making. Without a structured testing process, companies often rely on internal assumptions, stakeholder preferences, or design choices that may not reflect how real users actually behave. This disconnect between internal opinion and user reality is one of the most common reasons why websites underperform despite significant investment in development and design.

One of the most valuable characteristics of A/B testing is its ability to deliver incremental improvements that compound over time. While individual tests may produce modest gains in isolation, those gains accumulate meaningfully across multiple pages and touchpoints. Improving conversion by a few per cent at each of several steps in the customer journey, such as a homepage banner, a product page, and a checkout flow, can result in a substantial uplift in overall revenue without a single additional pound spent on traffic acquisition. This compounding effect is precisely what makes A/B testing one of the most cost-effective optimisation strategies available to ecommerce businesses.
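To make the compounding claim concrete, the sketch below multiplies per-step relative uplifts. It assumes the touchpoints are consecutive steps of one funnel (homepage banner, product page, checkout) and that each uplift holds independently of the others; the 3% figures are hypothetical.

```python
# A minimal sketch of how relative uplifts compound, assuming the touchpoints
# are sequential steps of one funnel and each uplift holds independently.
from math import prod


def combined_uplift(stage_uplifts: list[float]) -> float:
    """Return the overall relative uplift from per-step relative uplifts.

    Each step's conversion rate is multiplied by (1 + uplift), so the
    end-to-end conversion rate is multiplied by the product of those factors.
    """
    return prod(1 + u for u in stage_uplifts) - 1


# Hypothetical example: a 3% relative uplift at the homepage banner,
# the product page and the checkout.
overall = combined_uplift([0.03, 0.03, 0.03])
print(f"Overall uplift: {overall:.1%}")
```

Three 3% gains combine to roughly 9.3% end to end, slightly more than the 9% you would get by simply adding them.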

A/B testing also functions as a risk management tool. Before rolling out a significant design change or content update across an entire website, testing it on a smaller segment of your audience first shows whether it performs as intended. This is particularly important for Shopify stores, where a change to the product page or checkout can have an immediate and measurable effect on revenue. If you are serious about conversion rate optimisation for your Shopify store, A/B testing is not optional. It is the mechanism through which optimisation becomes evidence-based rather than speculative.

What Is A/B Split Testing?

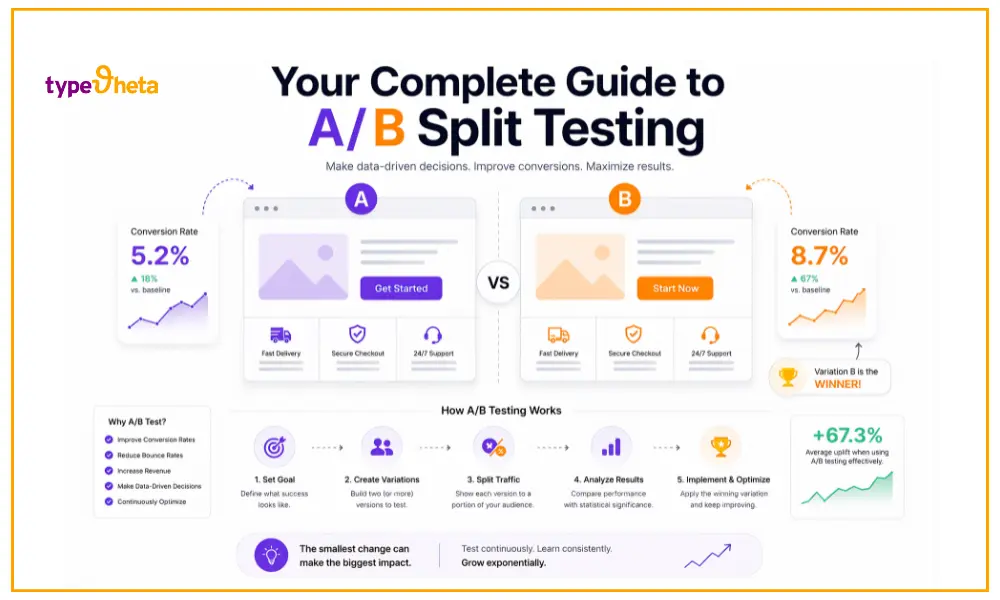

A/B split testing is a method of comparing two versions of a digital asset, whether a webpage, an email, an advertisement, or a product listing, to determine which one performs better against a defined objective. It works by showing version A, the original, to one group of users and version B, a modified version, to a separate group, then measuring how each version performs according to a specific metric such as conversion rate, click-through rate, or average order value.

The strength of A/B testing lies in its ability to isolate variables. By changing a single element at a time, businesses can identify with confidence exactly what is influencing user behaviour. This is fundamentally different from redesigning an entire page and hoping for improvement. When you change one thing and measure the result, you know what worked. When you change ten things at once, you are guessing.

At its core, A/B testing replaces subjectivity with measurable insight. The key components of any well-structured A/B test are as follows:

- Control (Version A): The original version of the page or element, left unchanged throughout the test.

- Variant (Version B): The modified version containing a single specific change.

- Traffic Split: Visitors are randomly assigned to one version or the other, most commonly on a 50/50 basis.

- Primary Metric: The specific measurement used to determine performance, such as conversion rate, click-through rate, or revenue per visitor.

- Outcome: A data-driven decision on which version to implement permanently, based on statistical evidence rather than opinion.
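The traffic split is normally handled by your testing tool, but the idea behind it can be sketched in a few lines. The example below is one common approach, not necessarily what any particular app does: it hashes a hypothetical visitor ID together with a hypothetical experiment name, so each visitor is assigned effectively at random yet always sees the same version on return visits.

```python
# A minimal sketch of deterministic 50/50 traffic assignment. The visitor IDs
# and experiment name are hypothetical; real testing tools do this for you.
import hashlib


def assign_variant(visitor_id: str, experiment: str, split: float = 0.5) -> str:
    """Return "A" (control) or "B" (variant) for a visitor.

    Hashing the experiment name with the visitor ID gives each visitor a
    stable, pseudo-random position in [0, 1), so the same visitor always sees
    the same version and different experiments split traffic independently.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).hexdigest()
    position = int(digest[:8], 16) / 0x100000000  # first 32 bits -> [0, 1)
    return "A" if position < split else "B"


counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}", "pdp-cta-colour")] += 1
print(counts)  # close to 5,000 each, but not exactly
```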

A/B Testing vs Split URL Testing vs Multivariate Testing

These three testing methods are related but serve different purposes, and understanding when to use each one is important for getting the most out of your optimisation efforts.

A/B Testing runs both variations on the same URL, focuses on a single variable, and is relatively quick to implement and analyse. It is the right approach for the vast majority of businesses because it balances simplicity with effectiveness.

Split URL Testing uses entirely separate URLs for each variation, making it better suited to major structural redesigns or significant layout changes. It requires more development effort and careful handling to avoid creating SEO issues. If you are planning a significant site overhaul, it is worth reading about how web development impacts SEO performance before deciding on your testing approach.

Multivariate Testing tests multiple elements simultaneously to understand how different combinations interact with one another. Because every combination of elements becomes its own variation, this approach requires considerably higher traffic volumes to reach statistical significance, and the analysis is more complex. For most small and mid-sized ecommerce businesses, multivariate testing is unnecessary. A disciplined programme of sequential A/B tests will usually deliver most of the same practical learnings with far less complexity, although it cannot reveal how elements interact.
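A quick way to see why multivariate tests are so traffic-hungry is to count the combinations. In the rough sketch below, the elements, their versions and the monthly traffic figure are all hypothetical.

```python
# A rough sketch of why multivariate tests need more traffic: every
# combination of element versions becomes its own test cell.
# The elements, versions and traffic figure are hypothetical.
from itertools import product

elements = {
    "headline": ["benefit", "curiosity", "question"],
    "hero_image": ["product", "lifestyle"],
    "cta_text": ["Buy now", "Add to basket"],
}

cells = list(product(*elements.values()))  # every combination of versions
monthly_visitors = 60_000

print(f"Cells to compare: {len(cells)}")  # 3 x 2 x 2 = 12
print(f"Visitors per cell: {monthly_visitors // len(cells):,}")
print(f"Visitors per version in a simple A/B test: {monthly_visitors // 2:,}")
```

With the same traffic, each multivariate cell receives 5,000 visitors against 30,000 per version in a two-way A/B test, so each comparison takes far longer to become reliable.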

How to Run an A/B Test: A Step-by-Step Approach

Running an A/B test effectively requires a structured process. Rushing into testing without clear preparation is one of the most common reasons businesses end up with unreliable results and wasted effort. The steps below outline the process in order:

- Define your goal. Identify exactly what you want to improve and why it matters commercially. A clearly defined goal ensures your test has direction and measurable outcomes.

- Form a hypothesis. A strong hypothesis explains why a specific change is expected to improve performance. It should be grounded in data, heatmap analysis, or customer feedback rather than intuition alone.

- Choose the right metric. Select a metric that aligns directly with your goal. Conversion rate works for sales-focused tests. Click-through rate suits engagement-focused ones.

- Build your variant. Make a single change. This ensures that whatever result you observe can be attributed to that one change with confidence.

- Determine sample size and duration. Use a sample size calculator before starting to work out how many visitors each version needs. A minimum of two weeks is generally recommended so that every day of the week is represented and natural variations in user behaviour are accounted for.

- Analyse results. Wait until the test has reached its planned sample size and duration, then check whether the difference is statistically significant before drawing conclusions. Avoid the temptation to stop the test the moment one version appears to be leading.

- Implement and document. Apply the winning variation and record the insights for future use. Every test result, including a failed hypothesis, adds to your understanding of your audience.
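For readers who want to see what a sample size calculator does, here is a minimal sketch using the standard normal-approximation formula for a two-sided comparison of two conversion rates. The 5% significance level and 80% power are common defaults rather than fixed rules, and the baseline rate and expected lift are hypothetical inputs.

```python
# A minimal sample size sketch for comparing two conversion rates, using the
# standard normal-approximation formula for a two-sided two-proportion test.
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    alpha: float = 0.05,
    power: float = 0.80,
) -> int:
    """Visitors needed in EACH version to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical store: 3% baseline conversion, hoping to detect a 10% relative lift.
print(sample_size_per_variant(0.03, 0.10))
```

For these inputs the formula asks for roughly 53,000 visitors per version. Detecting a larger lift, or starting from a higher baseline conversion rate, brings that figure down sharply, which is why effect size and baseline rate matter so much when planning a test.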

What Should You A/B Test?

A/B testing can be applied to almost any element of a website, but focusing on high-impact areas produces the best return on effort. These are the elements most directly connected to user decisions and commercial outcomes.

Headlines and Body Copy

The words you use to describe a product, explain a benefit, or frame an offer have a significant influence on whether a visitor engages or leaves. Testing benefit-driven headlines against curiosity-driven ones, or formal language against conversational language, can reveal a great deal about what motivates your audience to act.

Calls-to-Action

Button text, colour, placement, and size all affect how prominently an action registers in a user’s mind. Consider testing:

- Urgency-based language (“Get yours today”) against neutral language (“Learn more”)

- Button colour and contrast against the surrounding page

- Above-the-fold placement against mid-page placement

- Single CTA against multiple options on the same page

Page Layout and Visual Hierarchy

The order in which users encounter information shapes how they process it. Testing whether placing social proof above the fold improves conversions, or whether a two-column layout outperforms a single-column one, can produce meaningful improvements without changing any copy.

Forms and Checkout Fields

Forms are a consistently productive testing area, particularly on checkout and lead generation pages. Key things to test include:

- Reducing the number of required fields

- Reordering questions to lower perceived effort

- Testing multi-step forms against single-page forms

- Switching required fields to optional ones where commercially appropriate

If you are working on a Shopify store, it is worth understanding Shopify payment methods and how checkout configuration choices affect the conversion funnel before deciding what to prioritise in your testing plan.

Images and Video

Visual presentation is one of the first things a user processes on landing. Testing product images against lifestyle images, static visuals against short-form video, and different image sizes and placements can influence how emotionally connected a visitor feels with your product. For mobile-first audiences, visual performance has a particularly direct impact on conversions. It is worth reviewing why mobile optimisation is so critical for Shopify stores before designing your visual testing priorities.

Pricing Presentation and Offers

The way a price is displayed influences perceived value significantly. Consider testing:

- “Save 20%” against “£10 off today” for the same promotion

- Monthly versus annual pricing presentation

- Bundle pricing against individual item pricing

- Free shipping thresholds at different order values
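Percentage and fixed-amount framings only describe the same promotion at one specific price, which is worth checking before setting up the test. The hypothetical helper below produces both wordings for a given price; "Save 20%" and "£10 off" coincide only on a £50 item.

```python
# A tiny sketch that produces both wordings of the same discount, so the
# two variants describe an identical offer. Prices are hypothetical.

def discount_framings(price: float, percent_off: float) -> tuple[str, str]:
    """Return the percentage wording and the fixed-amount wording."""
    amount_off = price * percent_off / 100
    return f"Save {percent_off:g}%", f"£{amount_off:,.2f} off today"


for price in (50, 120):
    print(price, discount_framings(price, 20))
```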

Social Proof

Testimonials, review counts, star ratings, and trust badges all influence how confident users feel about completing a purchase. Testing the placement, format, and volume of social proof elements near key decision points, particularly adjacent to add-to-basket buttons and within the checkout flow, regularly produces some of the strongest results in ecommerce conversion testing.

Understanding Statistical Significance

Statistical significance is what separates reliable A/B testing from expensive guesswork. Without it, there is no assurance that the difference observed between your control and variant reflects a genuine improvement rather than natural variation in your traffic.

Several factors influence how quickly statistical significance can be achieved:

- Sample size is the most important factor. The more users included in your test, the more precisely you can measure the difference between versions.

- Effect size matters because a large difference between versions is easier to detect with confidence than a small one.

- Baseline conversion rate plays a role because a lower starting rate requires more traffic and more conversions before results become trustworthy.

- Test duration affects reliability because short tests miss the natural variation in user behaviour that occurs across different days of the week.

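The standard target is a 95% confidence level, which corresponds to a 5% significance threshold: if there were genuinely no difference between the versions, a gap at least as large as the one observed would arise by chance less than 5% of the time. Repeatedly checking results and stopping the test the moment they look positive is known as peeking, and it dramatically increases the likelihood of implementing changes that do not actually work in the long run.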
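One widely used check behind the 95% confidence level is the two-proportion z-test, sketched below. Testing tools do not all use this exact method (some use Bayesian or sequential statistics instead), and the visitor and conversion counts are hypothetical.

```python
# A minimal two-sided two-proportion z-test on conversion counts.
# The visitor and conversion counts below are hypothetical.
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(
    conv_a: int, visitors_a: int, conv_b: int, visitors_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if se == 0:
        return 0.0, 1.0  # no conversions (or all conversions): nothing to compare
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Control converts 300 of 10,000 visitors (3.0%); variant converts 360 (3.6%).
z, p = two_proportion_z_test(conv_a=300, visitors_a=10_000, conv_b=360, visitors_b=10_000)
print(f"z = {z:.2f}, p = {p:.3f}")
print("Significant at the 5% level" if p < 0.05 else "Not significant at the 5% level")
```

A p-value below 0.05 meets the 95% confidence target, but the figure is only trustworthy if it is checked once, at the sample size planned before the test began.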

A/B Testing for Ecommerce and Shopify

Ecommerce businesses are exceptionally well positioned to benefit from A/B testing because their objectives are measurable and their conversion events are clearly defined. Every improvement to a product page, collection page, or checkout flow has a direct and quantifiable impact on revenue.

Product Pages

Product pages are the natural starting point for most Shopify stores. Effective things to test include image order, description length and format, the visibility and design of the add-to-basket button, and the placement of trust signals and reviews. If your store is underperforming, it is worth understanding the most common reasons why Shopify stores fail before deciding where to focus your testing effort.

Collection Pages

Collection pages play a crucial role in helping users discover relevant products. Testing grid versus list layouts, default product sorting, the prominence of filters, and the amount of information shown per product in the grid can all influence how many users progress from browsing to viewing a specific product.

Checkout Process

The checkout is where the stakes are highest. Every unnecessary field and every moment of friction represents lost revenue. Key areas to test within the checkout include:

- Guest checkout against account-required checkout

- Reducing form fields to the minimum necessary

- Progress indicator design and placement

- Trust signals and security badges at key decision points

If you are planning significant structural changes to your store alongside your testing programme, reviewing how to plan Shopify growth effectively will help you sequence your priorities sensibly.

For merchants looking to scale their testing capability, custom app integrations can significantly accelerate the pace at which insights are generated and implemented, particularly if your testing needs have outgrown the capabilities of standard Shopify apps.

Common A/B Testing Mistakes to Avoid

The theory of A/B testing is straightforward, but the practice is full of pitfalls that reduce its effectiveness or produce misleading results. The most common mistakes to avoid are:

- Stopping tests too early. Results that look convincing after a few days often reverse once the test has run to completion. Always let the test reach its planned sample size before judging whether the result is statistically significant.

- Testing multiple variables simultaneously. When several elements change at once, you cannot identify which change drove the result, making the learning impossible to replicate.

- Ignoring mobile behaviour. A change that improves conversions on desktop can sometimes harm them on mobile. Always segment results by device type.

- Relying on opinions instead of data. The entire purpose of A/B testing is to replace assumption with evidence. If decisions are being overridden by stakeholder preference, the testing programme loses its value.

- Not segmenting results. New visitors and returning visitors often behave very differently. Failing to segment can obscure meaningful patterns within the data.

- Failing to document learnings. Without a record of what has been tested and what the result was, teams end up re-testing hypotheses that have already been explored, wasting time and traffic.
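The cost of stopping too early can be demonstrated with a small simulation. In the sketch below, both versions share the same true conversion rate (an A/A test), so every "significant" result is a false positive. It compares checking once at the end with checking after every batch of visitors and stopping at the first significant look. All parameters are hypothetical and chosen so the script runs in a few seconds.

```python
# A small simulation of peeking. Both versions have the SAME true conversion
# rate, so any "significant" result is a false positive.
import random
from math import sqrt
from statistics import NormalDist

Z_CRIT = NormalDist().inv_cdf(0.975)  # two-sided 5% threshold (about 1.96)


def is_significant(conv_a: int, conv_b: int, n: int) -> bool:
    """Two-proportion z-test with n visitors in each version."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = sqrt(pooled * (1 - pooled) * 2 / n)
    return se > 0 and abs(conv_b - conv_a) / n / se > Z_CRIT


def false_positive_rate(check_every_batch: bool, rng: random.Random,
                        experiments: int = 500, batches: int = 10,
                        visitors_per_batch: int = 200, rate: float = 0.05) -> float:
    """Share of A/A experiments declared significant.

    If check_every_batch is True, results are checked after every batch and the
    experiment stops at the first significant look; otherwise only the final
    result is checked.
    """
    flagged = 0
    for _ in range(experiments):
        conv_a = conv_b = n = 0
        for batch in range(1, batches + 1):
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_batch))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_batch))
            n += visitors_per_batch
            is_last = batch == batches
            if (check_every_batch or is_last) and is_significant(conv_a, conv_b, n):
                flagged += 1
                break
    return flagged / experiments


rng = random.Random(42)
once = false_positive_rate(False, rng)
peeking = false_positive_rate(True, rng)
print(f"Checked once at the end:   {once:.1%} false positives")
print(f"Checked after every batch: {peeking:.1%} false positives")
```

Checking once keeps false positives close to the intended 5%, while checking after every batch typically pushes the rate several times higher, even though neither version is actually better.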

Building a Testing Culture

The full value of A/B testing is only realised when it becomes an ongoing, embedded practice rather than a series of one-off experiments. Businesses that treat testing as a continuous process consistently outperform those that treat it as an occasional activity.

Building a testing culture begins with aligning your testing programme to your business goals. Each experiment should connect directly to a commercial objective, whether that is improving conversion rate, reducing cart abandonment, or increasing average order value. Creating a testing roadmap that prioritises experiments by potential impact ensures that effort is focused on the tests most likely to produce meaningful results.

Leadership buy-in matters considerably. Without support from the people who make investment decisions, testing programmes are vulnerable to being deprioritised when other demands arise. Demonstrating the financial value of modest conversion rate improvements, by framing a 2% uplift in terms of its annual revenue impact, is usually the most effective way to secure continued commitment.
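Translating a conversion uplift into annual revenue is simple arithmetic. The back-of-the-envelope sketch below treats the 2% uplift as a relative improvement in conversion rate and assumes traffic and average order value stay the same; the session, conversion and order value figures are hypothetical.

```python
# A back-of-the-envelope sketch for framing a conversion uplift in annual
# revenue terms. Assumes traffic and average order value are unchanged;
# all figures are hypothetical.

def annual_revenue_gain(annual_sessions: int, conversion_rate: float,
                        average_order_value: float, relative_uplift: float) -> float:
    """Extra annual revenue from a relative improvement in conversion rate."""
    baseline_revenue = annual_sessions * conversion_rate * average_order_value
    return baseline_revenue * relative_uplift


gain = annual_revenue_gain(
    annual_sessions=600_000,
    conversion_rate=0.025,
    average_order_value=60.0,
    relative_uplift=0.02,  # the "2% uplift", read as a relative improvement
)
print(f"Extra revenue per year: £{gain:,.0f}")
```

With these inputs the store's baseline is £900,000 a year, so a 2% relative uplift is worth about £18,000 annually, a figure that is usually easier to discuss with decision-makers than a percentage.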

Businesses that embed a genuine respect for data across their marketing, product, and commercial teams tend to generate better test hypotheses, because they pay closer attention to what their analytics are already telling them. If your organisation is exploring AI tools to support content and optimisation decisions, reading about AI tools for digital agencies alongside your testing practice is worthwhile, as the two disciplines complement each other increasingly well in 2026.

Final Thoughts

A/B split testing is one of the most consistently effective methods available for improving digital performance and driving sustainable business growth. Its power lies not in any single test, but in the cumulative effect of running regular, well-structured experiments and applying the learnings systematically over time.

For Shopify merchants in particular, the case for testing is especially strong. Clear conversion goals, measurable revenue outcomes, and a platform that supports experimentation through native features and third-party integrations make ecommerce one of the most natural environments in which to build a testing programme. If you are also thinking about how your broader SEO strategy connects to conversion performance, exploring conversion rate optimisation strategies for Shopify brands and understanding how website audits improve both SEO and conversions will give you a more complete picture of how optimisation disciplines work together.

The businesses that grow most reliably through testing are not the ones that find the biggest single win. They are the ones that keep testing, keep learning, and keep improving. That discipline, more than any individual result, is what separates businesses that optimise from those that guess.

FAQs: A/B Split Testing

What is the difference between A/B testing and split testing?

The two terms are often used interchangeably. Where a distinction is drawn, A/B testing typically runs both variations on the same URL, while split URL testing uses separate URLs for each version. Split URL testing is better suited to major structural redesigns, whereas A/B testing is the more practical choice for most page-level optimisation work.

How long should an A/B test run?

Most tests should run for a minimum of two weeks, or until the sample size calculated before launch has been reached, whichever takes longer, and only then be checked for statistical significance at the 95% confidence level. Tests run for shorter periods are prone to producing unreliable results that reverse once the full audience has been exposed to the change.

How much traffic is needed for A/B testing?

The required traffic depends on your current conversion rate and the size of the improvement you expect to detect. Higher traffic volumes allow tests to reach statistical significance faster, but meaningful tests can be run on lower-traffic sites provided they are given sufficient time to accumulate enough data.

Can I A/B test on Shopify?

Yes. Shopify supports A/B testing through a range of third-party apps, and an increasing number of built-in features support basic experimentation natively.

What is a good conversion rate uplift from A/B testing?

A 5 to 10% relative improvement (for example, a conversion rate rising from 3.0% to 3.3%) is generally considered strong, particularly when compounded across multiple pages and sustained over time. Smaller improvements are also valuable if they are consistent and reliable, as the cumulative effect of many modest gains is often greater than a single large one.

Is A/B testing worth it for smaller websites?

Yes. Even with lower traffic volumes, A/B testing provides valuable directional insight and supports gradual, evidence-based improvement. Tests will simply need to run for longer to reach statistical significance, which is a reasonable trade-off for the quality of decision-making it enables.